Introduction

The Digital Personal Data Protection (DPDP) Act, 2023 introduces parental consent (“consent-gating”) as the primary safeguard for children’s access to digital platforms, premised on the belief that parents are best positioned to protect minors’ privacy and well-being. This approach emerges against a backdrop where adolescents’ digital immersion has become near-universal, with smartphones acting as gateways to education, socialisation, and identity formation.

At the same time, rising concerns over screen addiction, algorithmic amplification, cyberbullying, self-harm content, and data exploitation have triggered regulatory anxiety worldwide. While empirical research shows consistent associations between excessive platform use and anxiety, depressive symptoms, and body-image dissatisfaction, especially among teenage girls, it also highlights that digital access itself is unevenly distributed, with gender, class, and geography shaping who benefits from the Internet.

Against this complex terrain, consent-gating seeks protection through restriction, but whether it safeguards children or deepens digital exclusion remains contested.

Body

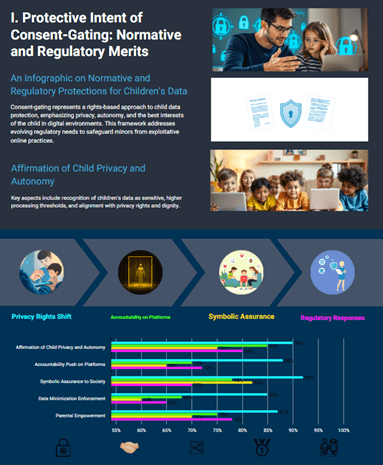

I. Protective Intent of Consent-Gating: Normative and Regulatory Merits

Affirmation of Child Privacy and Autonomy

- Consent-gating reflects a rights-based shift that recognises children as data subjects deserving enhanced protection, aligning with evolving interpretations of the right to privacy and the best interests of the child.

- Example / Case Study: The Supreme Court's recognition of informational privacy as intrinsic to dignity, in Justice K.S. Puttaswamy v. Union of India (2017), has encouraged lawmakers to treat children’s data as inherently sensitive, warranting higher thresholds of processing.

- Government Initiative: The DPDP framework complements child-centric cyber safety advisories issued through national digital literacy programmes, aiming to create safer online ecosystems.

Accountability Push on Platforms

- By mandating verifiable parental consent, the law implicitly pressures platforms to re-engineer default data practices, discouraging indiscriminate profiling, behavioural advertising, and opaque algorithmic targeting of minors.

- Example / Case Study: Global scrutiny of social media algorithms following whistleblower disclosures has shown how engagement-maximising designs disproportionately expose adolescents to harmful content.

- Government Initiative: Proposed digital competition reforms and sectoral guidelines seek to curb exploitative data practices, reinforcing the protective logic behind consent-gating.

Symbolic Assurance to Society

- In periods of moral panic following child-related tragedies, consent-gating offers a visible regulatory response, signalling state concern for child safety and parental authority.

- Example / Case Study: Comparative experiences from countries experimenting with age-based restrictions illustrate how such measures can temporarily restore public confidence, even if enforcement remains imperfect.

- Government Initiative: National child protection frameworks increasingly integrate digital harm as a recognised risk category.

II. Structural and Practical Limitations: Why Protection Often Fails in Practice

Illusion of Compliance and Technical Porosity

- Adolescents routinely bypass age and consent checks through false declarations, shared credentials, or VPNs, reducing consent-gating to a procedural hurdle rather than a substantive safeguard.

- Example / Case Study: Jurisdictions with strict age-gating have witnessed migration of minors from moderated platforms to encrypted or unregulated spaces, amplifying exposure to grooming and extremist content.

- Government Initiative: Cybercrime monitoring units have flagged enforcement challenges arising from anonymity and cross-border data flows.

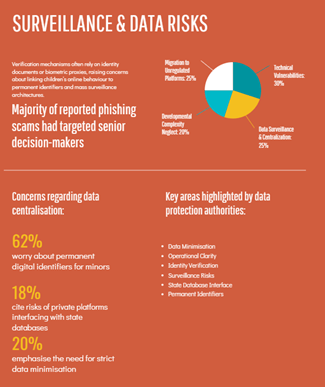

Risk of Surveillance and Data Centralisation

- Consent verification mechanisms often rely on identity documents or biometric proxies, raising concerns about linking children’s online behaviour to permanent identifiers.

- Example / Case Study: Debates around digital identity integration have highlighted risks of mass surveillance architectures, particularly when private platforms interface with state databases.

- Government Initiative: Data protection authorities have emphasised data minimisation, yet operational clarity on age verification remains limited.

Neglect of Adolescent Developmental Complexity

- Consent-gating assumes uniform parental capacity and benevolence, overlooking contexts where parents may lack digital literacy, time, or awareness of online risks and benefits.

- Example / Case Study: Mental health research shows that for many adolescents, especially those facing stigma or isolation, online communities provide crucial peer affirmation and emotional support.

- Government Initiative: School-based digital education programmes remain uneven, limiting complementary safeguards beyond consent.

III. Digital Exclusion as an Unintended Consequence

Gendered and Socio-Economic Disparities

- In societies with entrenched patriarchy, parental consent requirements can translate into blanket denial of access for girls, reinforcing pre-existing digital gender gaps.

- Example / Case Study: Household-level studies reveal that when Internet use is policed, devices are more likely to be confiscated from adolescent girls than from boys.

- Government Initiative: Digital inclusion missions aim to expand women’s connectivity, yet consent-gating risks undermining these gains at the household level.

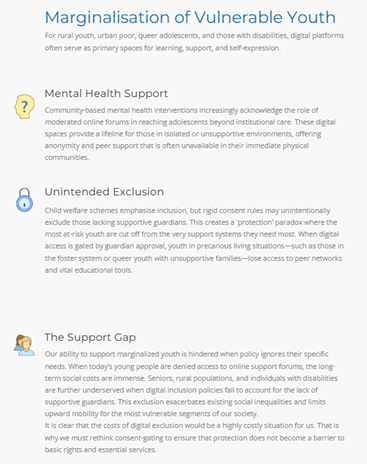

Marginalisation of Vulnerable Adolescents

- For rural youth, urban poor, queer adolescents, and those with disabilities, digital platforms often serve as primary spaces for learning, support, and self-expression.

- Example / Case Study: Community-based mental health interventions increasingly acknowledge the role of moderated online forums in reaching adolescents beyond institutional care.

- Government Initiative: Child welfare schemes emphasise inclusion, but rigid consent rules may unintentionally exclude those lacking supportive guardians.

Democratic Deficit in Policy Design

- Consent-gating policies are typically formulated without consulting young users, treating them as passive subjects rather than stakeholders with evolving capacities.

- Example / Case Study: Feedback from youth-led digital rights collectives has highlighted that exclusionary rules erode trust and encourage covert, riskier online behaviour.

- Government Initiative: Participatory governance platforms exist but are rarely leveraged to incorporate adolescent voices into digital regulation.

Conclusion

Parental consent under the DPDP Act, 2023, embodies a protective impulse rooted in legitimate concern for children’s privacy and safety. However, when deployed as a blunt, standalone mechanism, consent-gating risks substituting procedural compliance for genuine protection, often culminating in digital exclusion, especially for girls and marginalised adolescents.

Evidence from child well-being research increasingly suggests that harm arises less from access itself and more from exploitative platform design, unchecked algorithmic amplification, and lack of supportive digital literacy.

A sustainable way forward lies in layered regulation: enforceable duty-of-care obligations on platforms, independent oversight, sustained public investment in longitudinal child well-being research, and participatory policy processes that treat young people as co-creators of safer digital spaces.

Protecting children, ultimately, requires not removing technology from their lives, but cultivating a healthy media ecology where access, safety, and rights advance together rather than at each other’s expense.

Recap: