Ethical and Infrastructural Challenges of Artificial Intelligence in India’s Judicial Processes

Introduction

- Artificial Intelligence (AI) refers to computer systems capable of performing tasks that typically require human intelligence—such as learning, reasoning, language processing, and problem-solving. In the context of the judiciary, AI promises to speed up judicial processes, reduce pendency, and improve accessibility.

- India presently has over five crore pending court cases, and the Ministry of Law & Justice has encouraged the use of AI to alleviate judicial delays. The e-Courts Project Phase III, approved in 2023 with a ₹7,210 crore outlay, envisions AI-based features like intelligent case scheduling and OCR-enabled analytics to enhance efficiency and access.

- However, beyond potential gains, AI also raises serious ethical risks (hallucination, bias, and privacy breaches) and faces formidable infrastructural constraints (connectivity, hardware disparity, digital literacy, and data governance). Balancing these promises and perils demands a nuanced, evidence-backed debate.

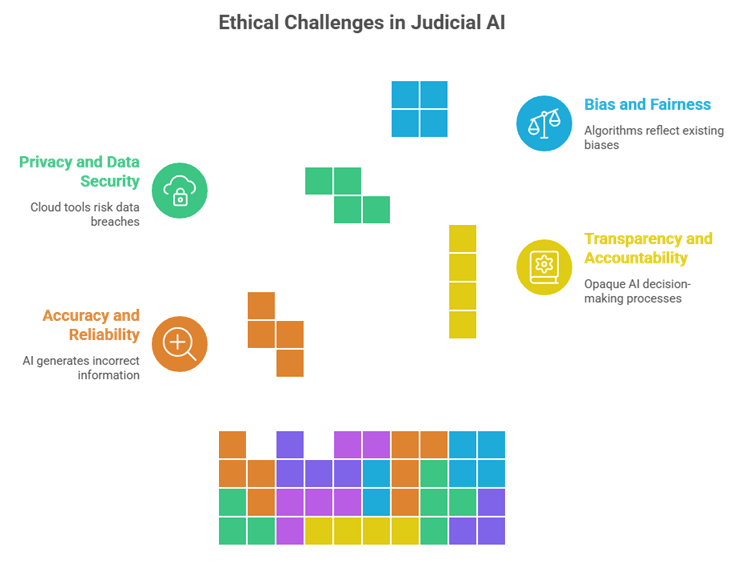

Ethical Challenges

1. Accuracy, Hallucination, and Reliability

- AI Hallucinations & Errors: Tools like OpenAI’s Whisper have been known to hallucinate. Reported errors include “Noel” being transcribed as “no” (Noel vs Guardian case, 2025) and “leave granted” being rendered in Hindi as “chhutti sweekaar” (roughly, “holiday approved”), which shows how such errors can surface in critical legal settings.

- LLM Fabrication Risks: Legal LLMs can invent case laws or wrongly cite precedents—undermining legal reliability and trust.

- Kerala HC Safeguard: Its July 2025 policy prohibits AI use in judicial findings, orders, or judgments; even translations or research must be human-verified, with transparency, fairness, accountability, and confidentiality emphasized.

2. Transparency, Accountability, and Human Oversight

- Opaque Decision-making: AI systems, especially generative models, may lack explainability—jeopardizing accountability in judicial reasoning.

- Kerala HC’s Guidelines: Mandate human supervision, audit trails, reporting errors to IT departments, and training for judicial officers on technical and ethical aspects.

- Ethical Principle Reinforcement: Emphasis on core values (transparency, fairness, accountability) serves to uphold judicial legitimacy and responsibility.

3. Privacy, Confidentiality, and Data Security

- Cloud-Based Risks: Generative AI tools (e.g., ChatGPT, Gemini) are typically cloud-based. Uploading case facts or personal data can breach confidentiality and privacy.

- Policy Restrictions: Kerala HC bars the use of non-approved cloud tools for case-related work and permits only AI tools approved by the High Court or the Supreme Court.

- Data Sensitivity: Without robust data governance or data protection law, integrating AI may expose sensitive personal or litigant information to misuse.

4. Bias, Accessibility, and Fairness

- Algorithmic Bias & Precedents: AI legal research tools may reflect search-engine bias—promoting popular rulings and invisibilising lesser-known but vital precedents.

- Equity Concerns: Courts must guard against skewed outcomes due to biased AI algorithms that disadvantage marginalized groups.

- Rights-based Approach Advocacy: Scholars argue for a justice-centred, rights-based framework as India navigates judicial AI deployment.

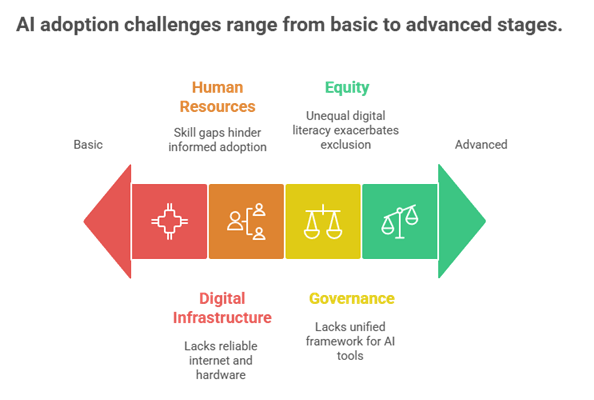

Infrastructural & Institutional Challenges

1. Digital Infrastructure & Connectivity

- Phase III Investment: Allocation for cloud infrastructure, e-filing, connectivity, hardware, video-conferencing, solar backup, etc., underlines recognition of infrastructure needs.

- Persistent Digital Divide: Many rural and lower courts still lack reliable internet, suitable hardware, or power backup—hampering uniform AI deployment.

- Legacy Systems: While Phase III seeks total digitization (including legacy records), transitioning remains a massive logistical and operational task.

2. Human Resources & Capacity Building

- Training Imperative: Kerala’s policy mandates training for judges and staff on ethical and technical aspects of AI use.

- Judicial Academy Role: Judicial academies and bar associations, in collaboration with AI governance experts, are ideal for capacity building.

- Skill Gaps: A lack of technical expertise within judicial staff remains a critical barrier to informed adoption.

3. Governance, Procurement, and Oversight Frameworks

- Need for Standards: Courts lack a unified framework to assess AI tools’ explainability, data handling, risk mitigation, or compliance.

- e-Courts Vision: Phase III’s vision document envisages governance structures, institutional scaffolding, and ecosystem-based adoption—but lacks explicit AI guidelines.

- Kerala’s Pre-Procurement Safeguards: By permitting only approved tools and requiring audits, Kerala starts constructing such oversight mechanisms.

4. Equity, Inclusion, and Accessibility

- Inclusivity Goals: Phase III emphasizes accessibility, inclusion, and ecosystem-based design to serve diverse users (litigants, lawyers, registries, civil society).

- Privacy-Inclusive Design: Draft Vision recommendations call for anonymization, consent-based access to documents, stricter privacy rules for sensitive cases, and grievance mechanisms.

- Digital Literacy Divide: Unequal digital literacy among litigants and staff may exacerbate exclusion unless proactively addressed.

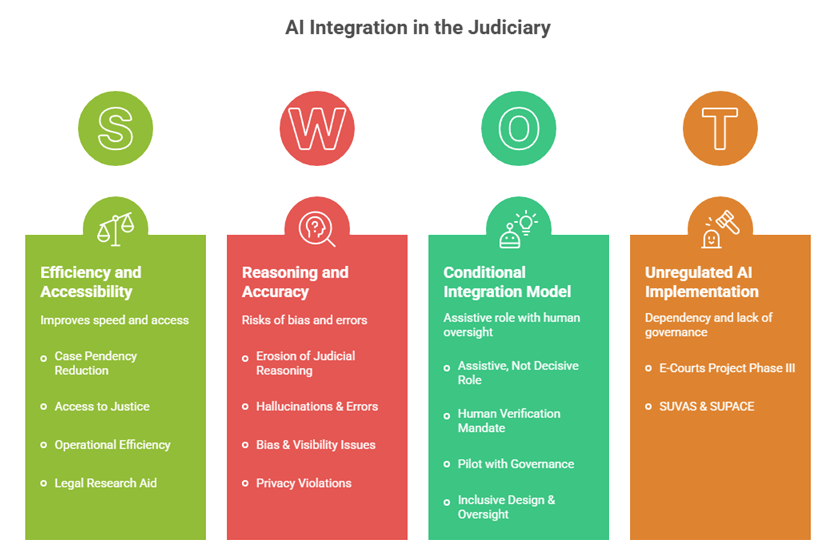

Pros and Cons: A Balanced Debate

For AI Integration

- Case Pendency Reduction: Intelligent systems for scheduling and analytics can help judges manage workloads and reduce backlog.

- Access to Justice: E-filing, virtual courts, e-Sewa Kendras and smart interfaces improve accessibility for litigants.

- Operational Efficiency: Automation of administrative tasks like scheduling, transcription, translation (with verification) can free judicial time.

- Legal Research Aid: AI-powered knowledge graphs and case matching (if accurate) can support legal librarians, researchers, and lawyers.

Against AI Integration

- Erosion of Judicial Reasoning: Over-dependence on AI can lead to mechanical adjudication, undermining nuanced human judgment.

- Hallucinations & Errors: AI can fabricate information and mislead judgments if misused or unchecked.

- Bias & Visibility Issues: AI may invisibilise lesser-known precedents or skew citation patterns.

- Privacy Violations: Unsafe AI use may leak sensitive case data, especially through cloud tools.

Middle Ground: Conditional Integration

- Assistive, Not Decisive Role: AI should support administrative or auxiliary tasks, not replace decision-making.

- Human Verification Mandate: Kerala’s model of requiring verification, audit, and training is a blueprint.

- Pilot with Governance: AI can be piloted with specified time-frames, performance metrics, safe data handling, and sunset clauses to avoid dependency.

- Inclusive Design & Oversight: Ecosystem-based planning, institutional bodies, data governance, grievance mechanisms, and continuous stakeholder engagement are vital.

Examples & Real-world Cases

- Kerala High Court Policy (July 2025): The first judicial policy in India to regulate AI use—prohibiting AI in judgments, mandating human oversight, training, audits, and approved tool usage.

- E-Courts Project Phase III: A ₹7,210 crore national scheme aiming for digital courts, intelligent systems, and inclusion, yet lacking explicit AI governance design.

- SUVAS & SUPACE: Supreme Court initiatives exploring AI for translation (SUVAS) and research assistance (SUPACE) to improve registry efficiency. They show intent to experiment but underscore the absence of an overarching AI policy.

Conclusion:

Integrating AI into India’s judicial processes is a double-edged prospect: it offers much-needed efficiency, accessibility, and modernization, yet it is fraught with ethical pitfalls, infrastructural bottlenecks, and risks to justice itself. AI must remain assistive, not adjudicative; human oversight is indispensable; and institutional, inclusive, and rights-centred frameworks are essential. Adopted on these terms and anchored in robust infrastructure and human judgment, AI can help make India’s judicial system a more efficient, equitable, and trusted institution of justice.

Recap: